Hi there! I am a Ph.D. student in the APEX Lab at Simon Fraser University, supervised by Ke Li. Prior to that, I received my Bachelor's degree in computer science from the University of Science and Technology of China.

My research focuses on neural rendering and its applications.

Email / Google Scholar / Twitter / GitHub

Yanshu Zhang, Chirag Vashist, Shichong Peng, Ke Li

3DV, 2026

Project Page / Paper

@inproceedings{zhang2026paprupclose,

title={PAPR Up-close: Close-up Neural Point Rendering without Holes},

author={Yanshu Zhang and Chirag Vashist and Shichong Peng and Ke Li},

booktitle={International Conference on 3D Vision},

year={2026}

}

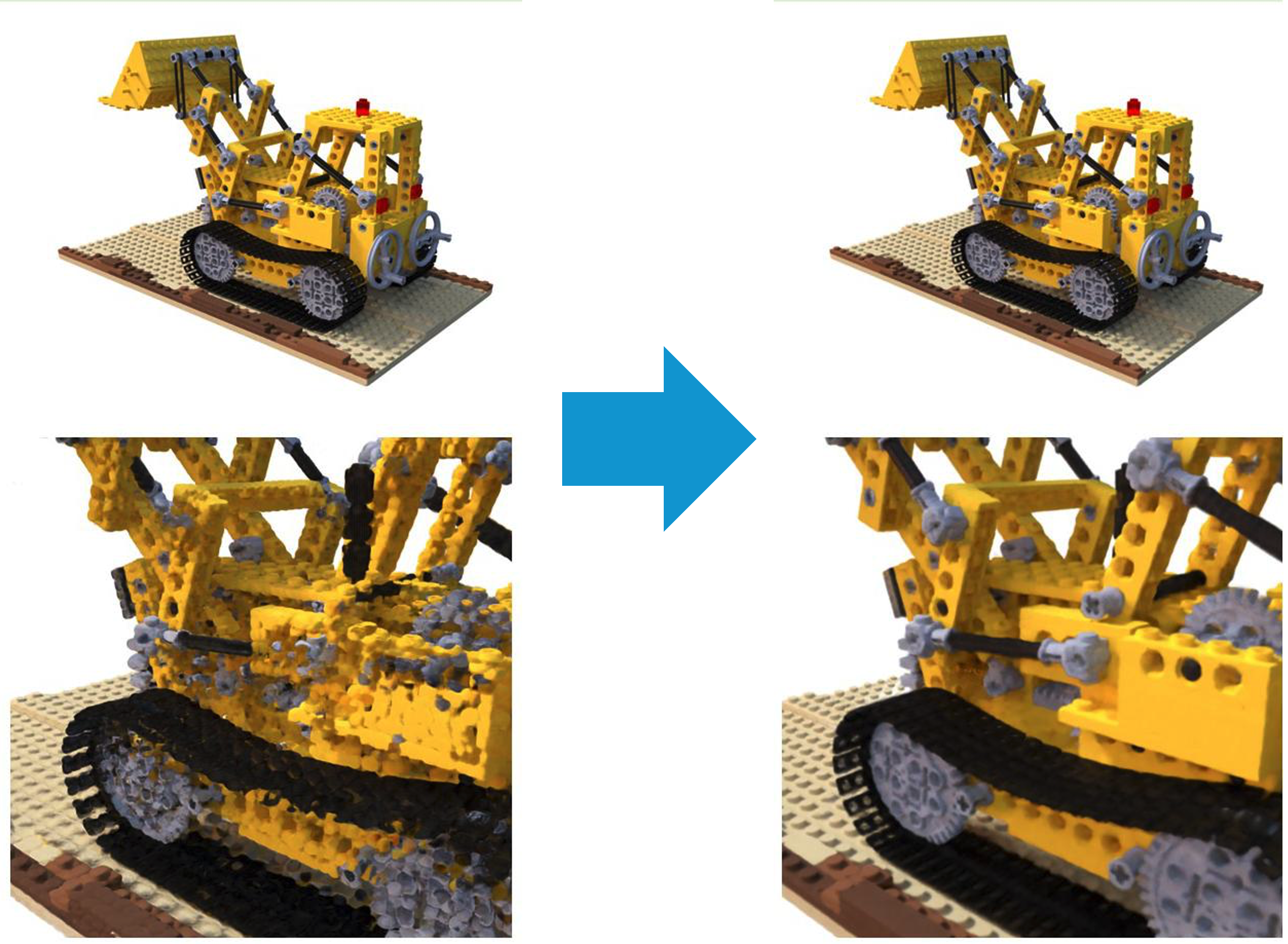

TL;DR: Hole-free close-up neural point rendering

We extend PAPR to enable robust close-up neural point rendering, significantly reducing holes and artifacts while preserving fine details.

Mehran Aghabozorgi, Alireza Moazeni, Yanshu Zhang, Ke Li

ICLR, 2026

Project Page / Code / arXiv

@inproceedings{aghabozorgi2026wimle,

title={{WIMLE}: Uncertainty-Aware World Models with {IMLE} for Sample-Efficient Continuous Control},

author={Mehran Aghabozorgi and Alireza Moazeni and Yanshu Zhang and Ke Li},

booktitle={The Fourteenth International Conference on Learning Representations},

year={2026}

}

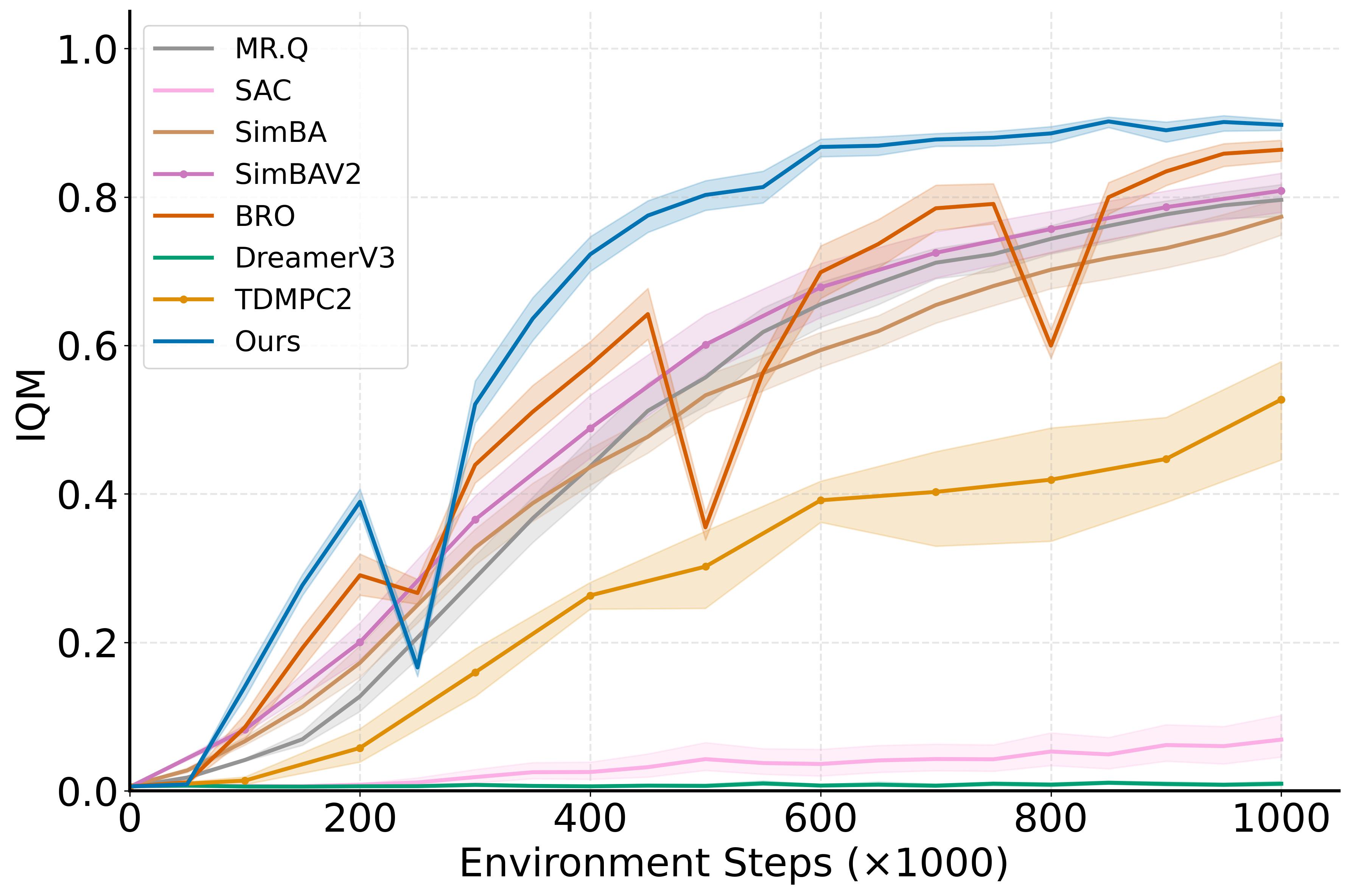

TL;DR: Multi-modal world models with uncertainty-aware policy learning for model-based RL

WIMLE learns stochastic, multi-modal world models using IMLE and weights synthetic transitions by predictive confidence, achieving state-of-the-art sample efficiency across 40 continuous-control tasks in DeepMind Control, HumanoidBench, and MyoSuite.
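The confidence weighting of synthetic transitions can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not WIMLE's actual weighting scheme, which this page does not describe. It assumes three things:

- Uncertainty is measured as the spread of several sampled predictions for the same transition.
- Confidence is `exp(-variance / tau)`.
- The weights are normalized to mean 1.

The function names and the temperature `tau` are hypothetical.

```python
import math
from statistics import pvariance
from typing import List, Sequence


def confidence_weights(samples: Sequence[Sequence[float]], tau: float = 1.0) -> List[float]:
    """Hypothetical per-transition weights from sampled model predictions.

    samples[j] holds several scalar predictions (e.g. from different
    world-model samples) for synthetic transition j. High spread between
    the predictions is treated as low confidence.
    """
    # Assumed confidence: decays exponentially with predictive variance.
    raw = [math.exp(-pvariance(s) / tau) for s in samples]
    # Normalize to mean 1 so the overall loss scale stays unchanged.
    mean = sum(raw) / len(raw)
    return [r / mean for r in raw]


def weighted_td_loss(td_errors: Sequence[float], weights: Sequence[float]) -> float:
    """Mean squared TD error in which each synthetic transition is scaled by its weight."""
    return sum(w * e * e for w, e in zip(weights, td_errors)) / len(td_errors)


if __name__ == "__main__":
    # The first transition's samples agree and the second's disagree,
    # so the first receives the larger weight.
    w = confidence_weights([[1.0, 1.0, 1.0], [0.0, 2.0, 4.0]])
    print(w, weighted_td_loss([0.5, 0.5], w))
```

Down-weighting rather than discarding uncertain transitions keeps all synthetic data in the batch. At the same time, it limits how much confidently wrong model rollouts can bias the learned value function.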

Shichong Peng, Yanshu Zhang, Ke Li

CVPR, 2024 (Highlight 🌟)

Project Page / Code / arXiv / Video

@inproceedings{peng2024papr,

title={PAPR in Motion: Seamless Point-level 3D Scene Interpolation},

author={Shichong Peng and Yanshu Zhang and Ke Li},

booktitle={IEEE/CVF Conference on Computer Vision and Pattern Recognition},

year={2024}

}

TL;DR: Seamless point-level 4D motion interpolation

We introduce the novel problem of point-level 3D scene interpolation. Given multi-view observations of a scene in two distinct states, the goal is to synthesize a smooth point-level interpolation between them without any intermediate supervision. Our method, PAPR in Motion, builds upon the Proximity Attention Point Rendering (PAPR) technique and generates seamless interpolations of both scene geometry and appearance.

Alireza Moazeni, Shichong Peng, Yanshu Zhang, Chirag Vashist, Ke Li

arXiv, 2024

arXiv

@article{moazeni2024intrinsic,

title={Intrinsic PAPR for Point-level 3D Scene Albedo and Shading Editing},

author={Alireza Moazeni and Shichong Peng and Ke Li},

journal={arXiv preprint arXiv:2407.00500},

year={2024}

}

TL;DR: Point-level 3D albedo and shading editing via intrinsic decomposition

Intrinsic PAPR decomposes a 3D scene into albedo and shading components using point-based neural rendering. This enables detailed point-level editing that remains consistent across viewpoints, without relying on complex shading models or simplistic priors.

Yanshu Zhang*, Shichong Peng*, Alireza Moazeni, Ke Li

NeurIPS, 2023 (Spotlight 🌟)

Project Page / Code / arXiv / Video

@inproceedings{zhang2023papr,

title={PAPR: Proximity Attention Point Rendering},

author={Yanshu Zhang and Shichong Peng and Alireza Moazeni and Ke Li},

booktitle={Thirty-seventh Conference on Neural Information Processing Systems},

year={2023}

}

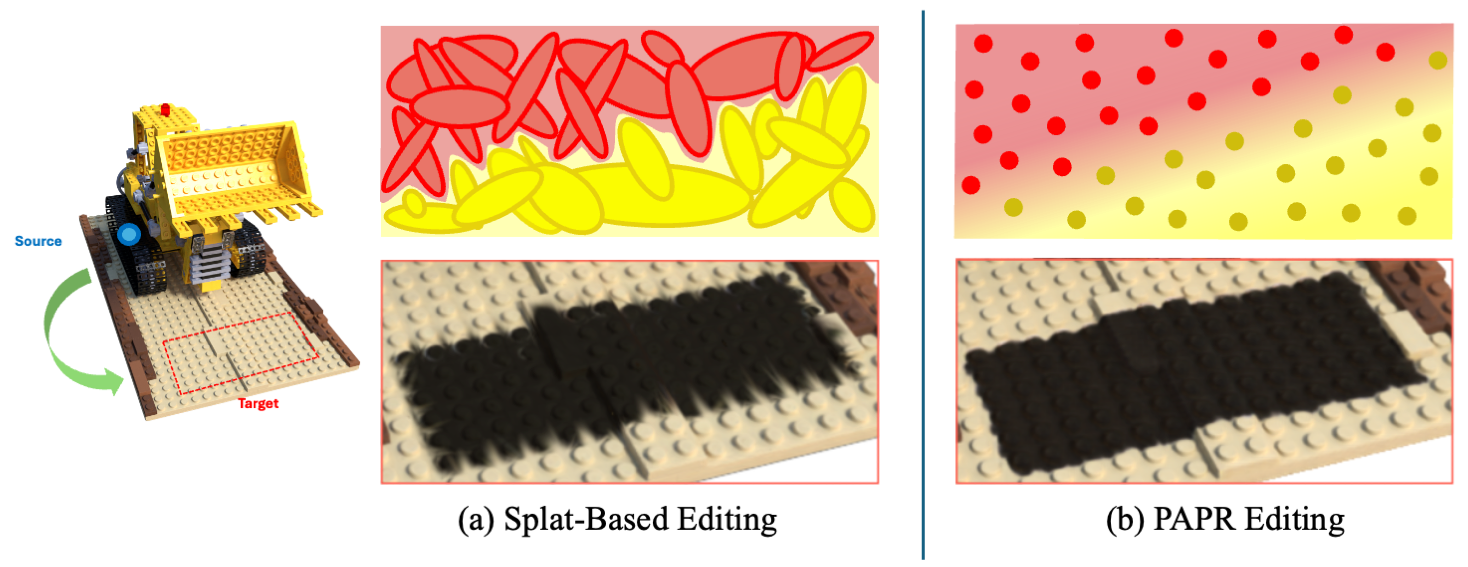

TL;DR: Reconstruct and render point clouds using attention

PAPR is a point-based surface representation that uses proximity attention to interpolate among the points nearest to each ray, producing high-quality rendered images. It supports non-volume-preserving geometry deformation by directly adjusting point positions. Unlike 3D Gaussian Splatting, it avoids creating holes while preserving texture details after deformation.
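The idea of interpolating among the points nearest to a ray can be shown with a toy sketch. It is illustrative only and not PAPR's implementation. In PAPR the attention is learned, whereas here the attention score is assumed to be a fixed softmax over negative point-to-ray distances. `k`, `temperature`, and all function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Sequence[float]


def point_to_ray_distance(p: Vec3, origin: Vec3, direction: Vec3) -> float:
    """Distance from point p to a ray; `direction` is assumed to be unit length."""
    v = [p[i] - origin[i] for i in range(3)]
    # Project onto the ray, clamping so points behind the origin use the origin itself.
    t = max(0.0, sum(v[i] * direction[i] for i in range(3)))
    closest = [origin[i] + t * direction[i] for i in range(3)]
    return math.dist(p, closest)


def proximity_attention(points: Sequence[Vec3], features: Sequence[Sequence[float]],
                        origin: Vec3, direction: Vec3, k: int = 3,
                        temperature: float = 1.0) -> Tuple[List[float], List[int], List[float]]:
    """Blend the features of the k points closest to the ray with softmax weights.

    Assumption: score = -distance / temperature (PAPR learns its attention instead).
    Returns (blended feature, indices of selected points, attention weights).
    """
    dists = [point_to_ray_distance(p, origin, direction) for p in points]
    nearest = sorted(range(len(points)), key=lambda i: dists[i])[:k]
    scores = [-dists[i] / temperature for i in nearest]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    weights = [e / z for e in exps]
    dim = len(features[0])
    blended = [sum(w * features[i][d] for w, i in zip(weights, nearest)) for d in range(dim)]
    return blended, nearest, weights


if __name__ == "__main__":
    pts = [(0.0, 0.0, 5.0), (0.1, 0.0, 5.0), (3.0, 0.0, 5.0)]
    feats = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
    # Ray from the origin along +z: the far-away third point is never selected with k=2.
    print(proximity_attention(pts, feats, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0), k=2))
```

Each rendered value depends smoothly on several nearby points rather than on a single one. This gives some intuition for why moving points can leave the surface free of gaps instead of opening holes.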


Template adapted from Qianli Ma's websites.